
Japanese AI lab Sakana AI has introduced a new technique that allows multiple large language models (LLMs) to cooperate on a single task, effectively creating a “dream team” of AI agents. The method, called Multi-LLM AB-MCTS, enables models to perform trial and error and combine their unique strengths to solve problems that are too complex for any individual model.

For enterprises, this approach provides a means to develop more robust and capable AI systems. Instead of being locked into a single provider or model, businesses could dynamically leverage the best aspects of different frontier models, assigning the right AI for the right part of a task to achieve superior results.

The power of collective intelligence

Frontier AI models are evolving rapidly. However, each model has its own distinct strengths and weaknesses derived from its unique training data and architecture. One might excel at coding, while another excels at creative writing. Sakana AI’s researchers argue that these differences are not a bug, but a feature.

“We see these biases and varied aptitudes not as limitations, but as precious resources for creating collective intelligence,” the researchers state in their blog post. They believe that just as humanity’s greatest achievements come from diverse teams, AI systems can also achieve more by working together. “By pooling their intelligence, AI systems can solve problems that are insurmountable for any single model.”

Thinking longer at inference time

Sakana AI’s new algorithm is an “inference-time scaling” technique (also referred to as “test-time scaling”), an area of research that has become very popular in the past year. While most of the focus in AI has been on “training-time scaling” (making models bigger and training them on larger datasets), inference-time scaling improves performance by allocating more computational resources after a model is already trained.

One common approach uses reinforcement learning to train models to generate longer, more detailed chain-of-thought (CoT) sequences, as seen in popular models such as OpenAI o3 and DeepSeek-R1. Another, simpler method is repeated sampling, where the model is given the same prompt multiple times to generate a variety of potential solutions, similar to a brainstorming session. Sakana AI’s work combines and advances these ideas.
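To make repeated sampling concrete, here is a minimal Python sketch of Best-of-N. It is an illustration only: `toy_generate` and `toy_score` are hypothetical stand-ins for an LLM call and an answer scorer, and none of the names come from Sakana AI's code.

```python
import random
from typing import Callable, Tuple


def best_of_n(
    generate: Callable[[random.Random], str],
    score: Callable[[str], float],
    n: int,
    seed: int = 0,
) -> Tuple[str, float]:
    """Sample n candidates independently and keep the highest-scoring one."""
    rng = random.Random(seed)
    candidates = [generate(rng) for _ in range(n)]
    best = max(candidates, key=score)
    return best, score(best)


# Hypothetical stand-in for an LLM call: each "answer" is a guessed number.
def toy_generate(rng: random.Random) -> str:
    return str(rng.randint(0, 100))


# Hypothetical scorer: answers closer to the target value 42 score higher.
def toy_score(answer: str) -> float:
    return -abs(int(answer) - 42)
```

Every sample here is independent of the others, so the only lever is `n`. Nothing learned from one attempt informs the next, which is the gap a more strategic search aims to close.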

“Our framework offers a smarter, more strategic version of Best-of-N (aka repeated sampling),” Takuya Akiba, research scientist at Sakana AI and co-author of the paper, told VentureBeat. “It complements reasoning techniques like long CoT through RL. By dynamically selecting the search strategy and the appropriate LLM, this approach maximizes performance within a limited number of LLM calls, delivering better results on complex tasks.”

How adaptive branching search works

The core of the new method is an algorithm called Adaptive Branching Monte Carlo Tree Search (AB-MCTS). It enables an LLM to perform trial and error by balancing two search strategies: “searching deeper” and “searching wider.” Searching deeper involves taking a promising answer and repeatedly refining it, while searching wider means generating completely new solutions from scratch. AB-MCTS combines these approaches, allowing the system to improve a good idea but also to pivot and try something new if it hits a dead end or discovers another promising direction.

To accomplish this, the system uses Monte Carlo Tree Search (MCTS), a decision-making algorithm famously used by DeepMind’s AlphaGo. At each step, AB-MCTS uses probability models to decide whether it’s more strategic to refine an existing solution or generate a new one.
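The refine-or-generate decision can be illustrated with a heavily simplified Python sketch. It is not the paper's formulation: it assumes a flat pool of candidates rather than a full search tree, scores in the range 0 to 1, and a Beta posterior per action that is sampled at every step (Thompson sampling). All function names, including the toy `generate`, `refine` and `score` stand-ins, are hypothetical.

```python
import random
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class ActionStats:
    """Beta posterior over how rewarding an action has been so far."""
    alpha: float = 1.0
    beta: float = 1.0

    def sample(self, rng: random.Random) -> float:
        return rng.betavariate(self.alpha, self.beta)

    def update(self, score: float) -> None:
        # Treat a score in [0, 1] as a fractional success.
        self.alpha += score
        self.beta += 1.0 - score


def adaptive_search(
    generate: Callable[[random.Random], float],
    refine: Callable[[float, random.Random], float],
    score: Callable[[float], float],
    budget: int,
    seed: int = 0,
) -> Tuple[float, float, List[str]]:
    rng = random.Random(seed)
    stats = {"wider": ActionStats(), "deeper": ActionStats()}
    candidates: List[Tuple[float, float]] = []  # (score, solution)
    history: List[str] = []
    for _ in range(budget):
        if not candidates:
            action = "wider"  # nothing to refine yet
        else:
            # Draw one sample per action and follow the larger draw.
            action = max(stats, key=lambda a: stats[a].sample(rng))
        if action == "wider":
            solution = generate(rng)           # brand-new attempt
        else:
            parent = max(candidates, key=lambda c: c[0])[1]
            solution = refine(parent, rng)     # improve the best so far
        s = score(solution)
        stats[action].update(s)
        candidates.append((s, solution))
        history.append(action)
    best_score, best = max(candidates, key=lambda c: c[0])
    return best, best_score, history


# Toy problem (hypothetical): find a number close to 0.7.
def toy_generate(rng: random.Random) -> float:
    return rng.random()


def toy_refine(parent: float, rng: random.Random) -> float:
    return min(1.0, max(0.0, parent + rng.uniform(-0.05, 0.05)))


def toy_score(x: float) -> float:
    return 1.0 - abs(x - 0.7)
```

Because each action is chosen by sampling from its posterior rather than by taking the current average, an action that has looked weak so far still gets occasional tries, which is what lets the search pivot when refinement stalls.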

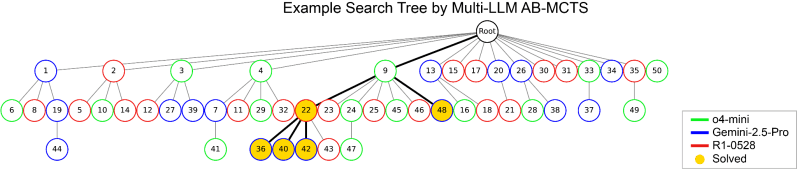

The researchers took this a step further with Multi-LLM AB-MCTS, which not only decides “what” to do (refine vs. generate) but also “which” LLM should do it. At the start of a task, the system doesn’t know which model is best suited for the problem. It begins by trying a balanced mix of available LLMs and, as it progresses, learns which models are more effective, allocating more of the workload to them over time.
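The same idea extends to choosing a model. The Python sketch below treats each LLM as an arm of a multi-armed bandit and uses Thompson sampling to shift calls toward whichever model has been succeeding. It is an assumption-laden illustration, not Sakana AI's implementation: the model names are placeholders, and each call's outcome is simulated from a fixed, made-up success rate instead of a real LLM response.

```python
import random
from collections import Counter
from typing import Dict


def allocate_calls(
    success_rates: Dict[str, float],
    budget: int,
    seed: int = 0,
) -> Counter:
    """Simulate how a Thompson-sampling bandit spreads calls across models.

    success_rates maps a (hypothetical) model name to the probability that
    one call to it produces a good result on the current task.
    """
    rng = random.Random(seed)
    # Start every model with the same uninformative Beta(1, 1) prior.
    posterior = {name: [1.0, 1.0] for name in success_rates}
    usage: Counter = Counter()
    for _ in range(budget):
        # Sample a plausible success rate per model and pick the highest.
        chosen = max(posterior, key=lambda m: rng.betavariate(*posterior[m]))
        solved = rng.random() < success_rates[chosen]
        posterior[chosen][0 if solved else 1] += 1.0
        usage[chosen] += 1
    return usage


usage = allocate_calls({"model_a": 0.9, "model_b": 0.05}, budget=400)
print(usage)
```

Early on, the identical priors produce a roughly even mix of calls. As evidence accumulates, the stronger model's posterior concentrates at a higher value and it wins most of the draws, mirroring the behavior described above.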

Putting the AI ‘dream team’ to the test

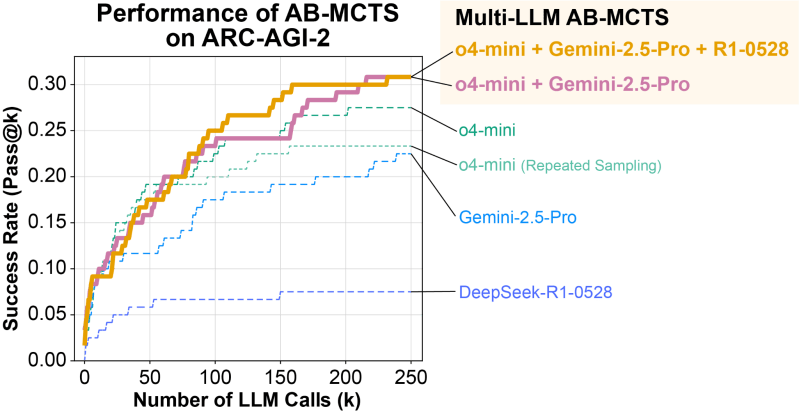

The researchers tested their Multi-LLM AB-MCTS system on the ARC-AGI-2 benchmark. ARC (Abstraction and Reasoning Corpus) is designed to test a human-like ability to solve novel visual reasoning problems, making it notoriously difficult for AI.

The team used a combination of frontier models, including o4-mini, Gemini 2.5 Pro, and DeepSeek-R1.

The collective of models was able to find correct solutions for over 30% of the 120 test problems, a score that significantly outperformed any of the models working alone. The system demonstrated the ability to dynamically assign the best model for a given problem. On tasks where a clear path to a solution existed, the algorithm quickly identified the most effective LLM and used it more frequently.

More impressively, the team observed instances where the models solved problems that were previously impossible for any single one of them. In one case, a solution generated by the o4-mini model was incorrect. However, the system passed this flawed attempt to DeepSeek-R1 and Gemini 2.5 Pro, which were able to analyze the error, correct it, and ultimately produce the right answer.

“This demonstrates that Multi-LLM AB-MCTS can flexibly combine frontier models to solve previously unsolvable problems, pushing the limits of what is achievable by using LLMs as a collective intelligence,” the researchers write.

“In addition to the individual pros and cons of each model, the tendency to hallucinate can vary significantly among them,” Akiba said. “By creating an ensemble with a model that is less likely to hallucinate, it could be possible to achieve the best of both worlds: powerful logical capabilities and strong groundedness. Since hallucination is a major issue in a business context, this approach could be valuable for its mitigation.”

From research to real-world applications

To help developers and businesses apply this technique, Sakana AI has released the underlying algorithm as an open-source framework called TreeQuest, available under an Apache 2.0 license (usable for commercial purposes). TreeQuest provides a flexible API, allowing users to implement Multi-LLM AB-MCTS for their own tasks with custom scoring and logic.

“While we are in the early stages of applying AB-MCTS to specific business-oriented problems, our research reveals significant potential in several areas,” Akiba said.

Beyond the ARC-AGI-2 benchmark, the team successfully applied AB-MCTS to tasks such as complex algorithmic coding and improving the accuracy of machine learning models.

“AB-MCTS could also be highly effective for problems that require iterative trial-and-error, such as optimizing performance metrics of existing software,” Akiba said. “For example, it could be used to automatically find ways to improve the response latency of a web service.”

The release of a practical, open-source tool could pave the way for a new class of more powerful and reliable enterprise AI applications.
